The Rockset Kafka Connector is a Confluent-verified Gold Kafka connector sink plugin that takes every event in the topics being watched and sends it to a collection of documents in Rockset. Rockset is a serverless search and analytics engine that can continuously ingest data streams from Kafka without the need for a fixed schema and serve fast SQL queries on that data. Regardless of whether your data is coming from edge devices, on-premises datacenters, or cloud applications, you can integrate them with a self-managed Kafka cluster or with Confluent Cloud (/confluent-cloud), which provides serverless Kafka, mission-critical SLAs, consumption-based pricing, and zero efforts on your part to manage the cluster.Ĭomplementary to the Kafka ecosystem and Confluent Platform is Rockset, which likewise serves as a great fit for interactive analysis of event streaming data.

Kafka Connect acts as sink to consume the data in real time and ingest it into Rockset. What if mainframes, databases, logs, or sensor data are involved in your use case? The ingested data is stored in a Kafka topic. It enables easy, scalable, and reliable integration with all sources and sinks, as can seen through real-time Twitter feeds in our upcoming example. Kafka Connect is a core component in event streaming architecture. In addition, it is often used for smaller datasets (e.g., bank transactions) to ensure reliable messaging and processing with high availability, exactly once semantics, and zero data loss. Not only can Kafka be used for both real-time and batch applications, but it can also integrate with non-event-streaming communication paradigms like files, REST, and JDBC. Enterprise Service Bus (ESB) – Friends, Enemies or Frenemies? explains in more detail why many new integration architectures leverage Apache Kafka instead of legacy tools like RabbitMQ, ETL, and ESB. Kafka often acts as the core foundation of a modern integration layer. Among these are Confluent Schema Registry, which ensures the right message structure, and ksqlDB for continuous stream processing on data streams, such as filtering, transformations, and aggregations using simple SQL commands. Kafka’s ecosystem also includes other valuable components, which are used in most mission-critical projects. The Apache Kafka project includes two additional components: Kafka Connect for integration and Kafka Streams for stream processing.

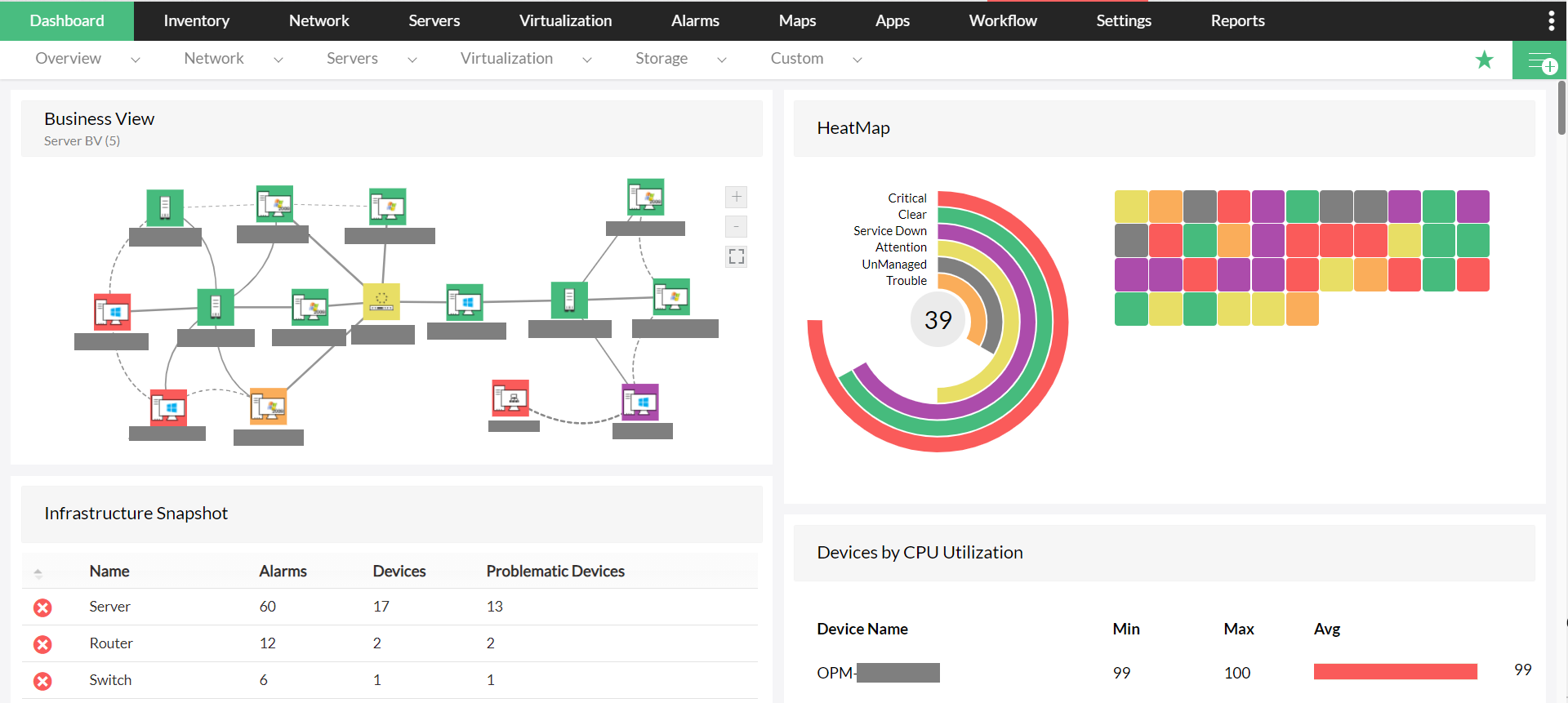

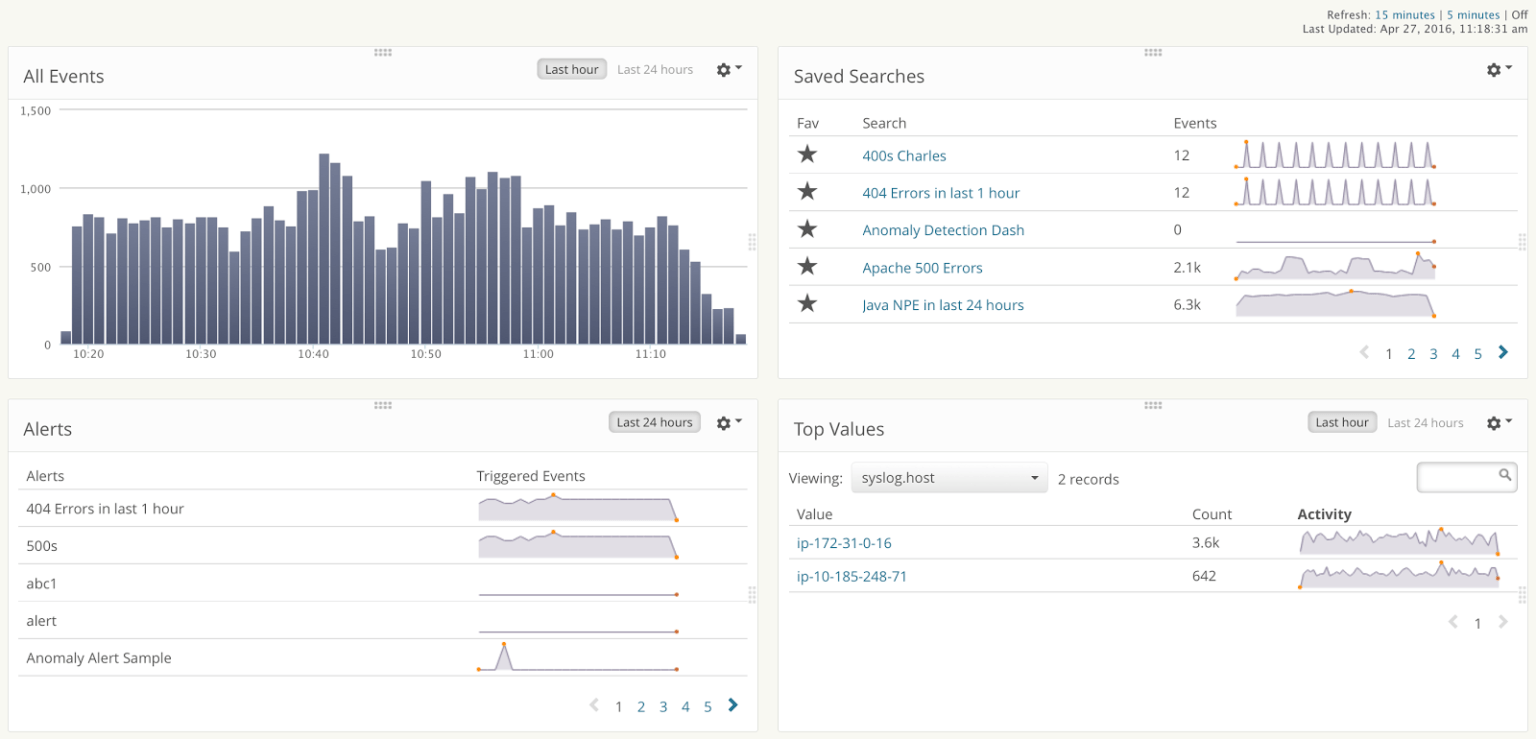

Apache Kafka as an event streaming platform for real-time analyticsĪpache Kafka is an event streaming platform that combines messages, storage, and data processing. Let’s now dig a little bit deeper into Kafka and Rockset for a concrete example of how to enable real-time interactive queries on large datasets, starting with Kafka. Some Kafka and Rockset users have also built real-time e-commerce applications, for example, using Rockset’s Java, Node.js ®, Go, and Python SDKs where an application can use SQL to query raw data coming from Kafka through an API (but that is a topic for another blog). Rockset supports JDBC and integrates with other SQL dashboards like Tableau, Grafana, and Apache Superset. Leveraging Rockset, a scalable SQL search and analytics engine based on RocksDB, and in conjunction with BI and analytics tools, we’ll examine a solution that performs interactive, real-time analytics on top of Apache Kafka and also show a live monitoring dashboard example with Redash. Batch processing and reports after minutes or even hours is not sufficient. In the most critical use cases, every seconds counts. Examples include microservice architectures, mainframe integration, instant payment, fraud detection, sensor analytics, real-time monitoring, and many more-driven by business value, which should always be a key driver from the start of each new Kafka project:Īccess to massive volumes of event streaming data through Kafka has sparked strong interest in interactive, real-time dashboards and analytics, with the idea being similar to what was built on top of batch frameworks like Hadoop in the past using Impala, Presto, or BigQuery: the user wants to ask questions and get answers quickly. The significant difference today is that companies use Apache Kafka as an event streaming platform for building mission-critical infrastructures and core operations platforms. However, Apache Kafka is more than just messaging. 
In the early days, many companies simply used Apache Kafka ® for data ingestion into Hadoop or another data lake.
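As a rough illustration of how a sink connector such as the Rockset Kafka Connector might be registered with a self-managed Kafka Connect cluster, the sketch below builds the JSON body that Kafka Connect's REST API accepts on `POST /connectors`. Only the generic keys `connector.class`, `tasks.max`, and `topics` are standard Kafka Connect settings. The connector name, the `tweets` topic, and the class name `rockset.RocksetSinkConnector` are assumptions made for this example, and the connector-specific settings (endpoint, credentials, data format) are deliberately left out because they should be taken from the connector's own documentation.

```python
import json


def build_sink_connector_request(name, topics, connector_class,
                                 tasks_max=1, extra_config=None):
    """Build the JSON body for Kafka Connect's POST /connectors endpoint.

    Kafka Connect expects a connector name plus a flat map of string
    settings. `extra_config` is where connector-specific keys would go.
    """
    config = {
        "connector.class": connector_class,
        "tasks.max": str(tasks_max),
        # A sink connector watches a comma-separated list of topics.
        "topics": ",".join(topics),
    }
    config.update(extra_config or {})
    return {"name": name, "config": config}


request_body = build_sink_connector_request(
    name="rockset-sink-example",                     # hypothetical name
    topics=["tweets"],                               # hypothetical topic
    connector_class="rockset.RocksetSinkConnector",  # assumed class name
)

# This is the payload one would send to http://<connect-host>:8083/connectors
print(json.dumps(request_body, indent=2))
```

Building the payload as a plain dictionary keeps the sketch free of any client library; sending it is a single HTTP POST with a `Content-Type: application/json` header against the Connect worker.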
